Clinicians and engineers in Oxford have begun using artificial intelligence alongside endoscopy to get more accurate readings of the pre-cancerous condition Barrett’s oesophagus, and so identify the patients most at risk of developing cancer.

In a research paper published in the journal Gastroenterology, the researchers report that their new AI-driven 3D reconstruction of Barrett’s oesophagus achieved 97.2% accuracy in measuring the extent of this pre-cancerous condition in real time. This would enable clinicians to assess cancer risk, choose the best surveillance interval and judge the response to treatment more quickly and confidently.

The research was supported by the NIHR Oxford Biomedical Research Centre (BRC), through its cancer and imaging themes.

Barrett’s is a pre-malignant condition that develops in the lower oesophagus in response to acid reflux. For Barrett’s oesophagus without dysplasia, the risk of developing cancer is low, at less than 0.1-0.4% per year, or around one in 200 patients. However, that risk increases with the extent of the Barrett’s lining.

Clinicians use a system called the Prague C&M criteria to give a standardised measure of Barrett’s oesophagus. It records the length of the circumferential Barrett’s segment (C) and the maximum extent of the affected area (M). This score gives a rough indication of the patient’s cancer risk and of how often they need surveillance endoscopy: usually every five years for low-risk cases and every two to three years for longer Barrett’s segments.
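To illustrate what the C&M score records, here is a minimal Python sketch of the conventional manual calculation. It assumes each landmark is read as the endoscope’s insertion depth from the incisors, so C is the distance from the gastro-oesophageal junction (the top of the gastric folds) up to the top of the circumferential segment, and M is the distance up to the highest point of Barrett’s lining. The class and function names and the example depths are hypothetical, and this is not the Oxford team’s 3D method, which derives these lengths from the endoscopy video instead.

```python
from dataclasses import dataclass


@dataclass
class EndoscopyLandmarks:
    """Hypothetical record of insertion depths in cm, measured from the incisors."""
    goj_cm: float                  # top of the gastric folds (gastro-oesophageal junction)
    circumferential_top_cm: float  # proximal margin of the circumferential Barrett's segment
    max_extent_top_cm: float       # most proximal point of any Barrett's lining


def prague_cm(lm: EndoscopyLandmarks) -> tuple[float, float]:
    """Return (C, M) in cm above the gastro-oesophageal junction."""
    c = lm.goj_cm - lm.circumferential_top_cm
    m = lm.goj_cm - lm.max_extent_top_cm
    # The circumferential segment cannot reach higher than the maximum extent.
    if c < 0 or m < c:
        raise ValueError("inconsistent landmarks: expected 0 <= C <= M")
    return c, m


def format_prague(c: float, m: float) -> str:
    """Format the score in the usual CxMy notation, e.g. C2M5."""
    return f"C{c:g}M{m:g}"


landmarks = EndoscopyLandmarks(goj_cm=40.0, circumferential_top_cm=38.0, max_extent_top_cm=35.0)
c, m = prague_cm(landmarks)
print(format_prague(c, m))  # prints "C2M5"
```

A score of C2M5 describes a 2 cm circumferential segment with tongues of Barrett’s lining reaching 5 cm above the junction. Because every value depends on reading depths off the scope as it is withdrawn, small estimation errors feed directly into the score, which is the step the AI reconstruction aims to make more reliable.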

Oxford University Hospitals (OUH) NHS Foundation Trust has a cohort of around 800 patients with Barrett’s who have periodic endoscopic surveillance.

OUH Consultant Gastroenterologist Professor Barbara Braden, together with Dr Adam Bailey, oversees a large endoscopic surveillance programme for Barrett’s patients at OUH. She says the quality of endoscopy depends heavily on the skill and expertise of the person carrying out the procedure.

“Until now, we have not had any accurate ways of measuring and quantifying the Barrett’s oesophagus. Currently, we insert the scope and then we estimate the length by pulling it back,” said Prof Braden.

“We asked colleagues from the Department of Engineering Science – Prof Jens Rittscher and Dr Sharib Ali – whether they could find a way to measure distances and areas from endoscopic videos to give us a more accurate picture of the Barrett’s area and they came up with the brilliant idea of three-dimensional surface reconstruction.”

Prof Braden, of the University of Oxford’s Translational Gastroenterology Unit, based at the John Radcliffe Hospital, added: “Currently, you have to have a great deal of experience to know how to spot the subtle changes which indicate early neoplastic alterations in Barrett’s oesophagus. Most endoscopists don’t encounter an early Barrett’s cancer that often. So, instead of teaching thousands of endoscopists, by applying deep learning techniques to endoscopic videos you can teach a programme.”

The Oxford technique reconstructs the surface of the Barrett’s area in 3D from the endoscopy video and calculates the C&M score automatically. This 3D reconstruction allows the clinician to quantify the Barrett’s area precisely, including patches or ‘islands’ not connected to the main Barrett’s area.

Dr Sharib Ali, first author of the paper and the main developer of the technology, is part of the team working on AI solutions for endoscopy at the University of Oxford’s Department of Engineering Science. He said: “Automated segmentation of these Barrett’s areas and projecting them in 3D allows the clinician to not only report very accurately the extent of the Barrett’s area, but to pinpoint precisely the location of any dysplasia or tumour, which has not been possible up to now.”

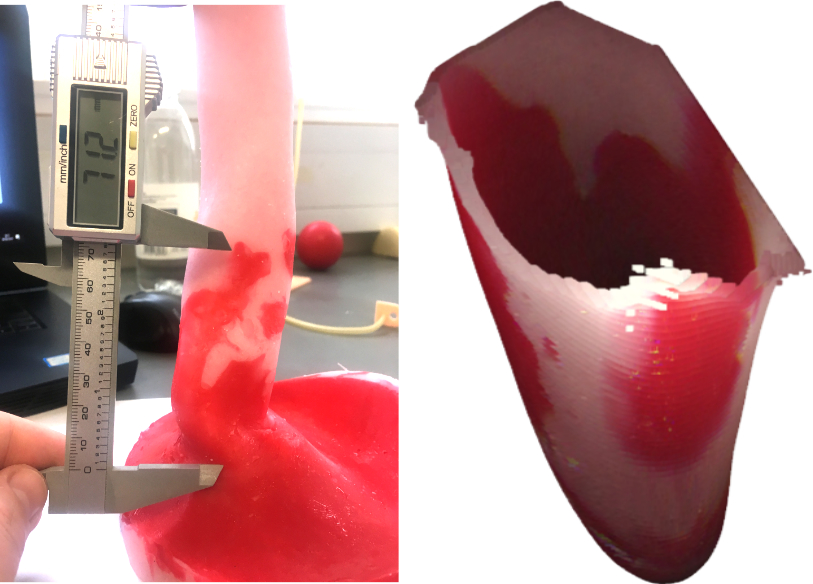

The technique was tested on a purpose-built 3D-printed oesophagus phantom and on high-definition videos from 131 patients scored by expert endoscopists. On the phantom video data, it achieved 97.2% accuracy for the C&M length measurements and 98.4% accuracy for the area of the whole Barrett’s oesophagus. On patient data, the automated C&M measurements agreed with the scores given by the expert endoscopists.

“With this new AI technology, the reporting system will be much more rigorous and accurate than before. It makes it much easier when the clinician sees the patient again – they know exactly where to target biopsies or therapy. And the quicker and more efficient it is, the better the experience for the patient,” Dr Sharib Ali explained.